- PJ's Newsletter - AI Filmmaking

Gossip Goblin's Crazy Workflow for Building Original Worlds (200M+ Views)

I got to interview Zach London on his entire process for creating "The Patchwright". His process is insane.

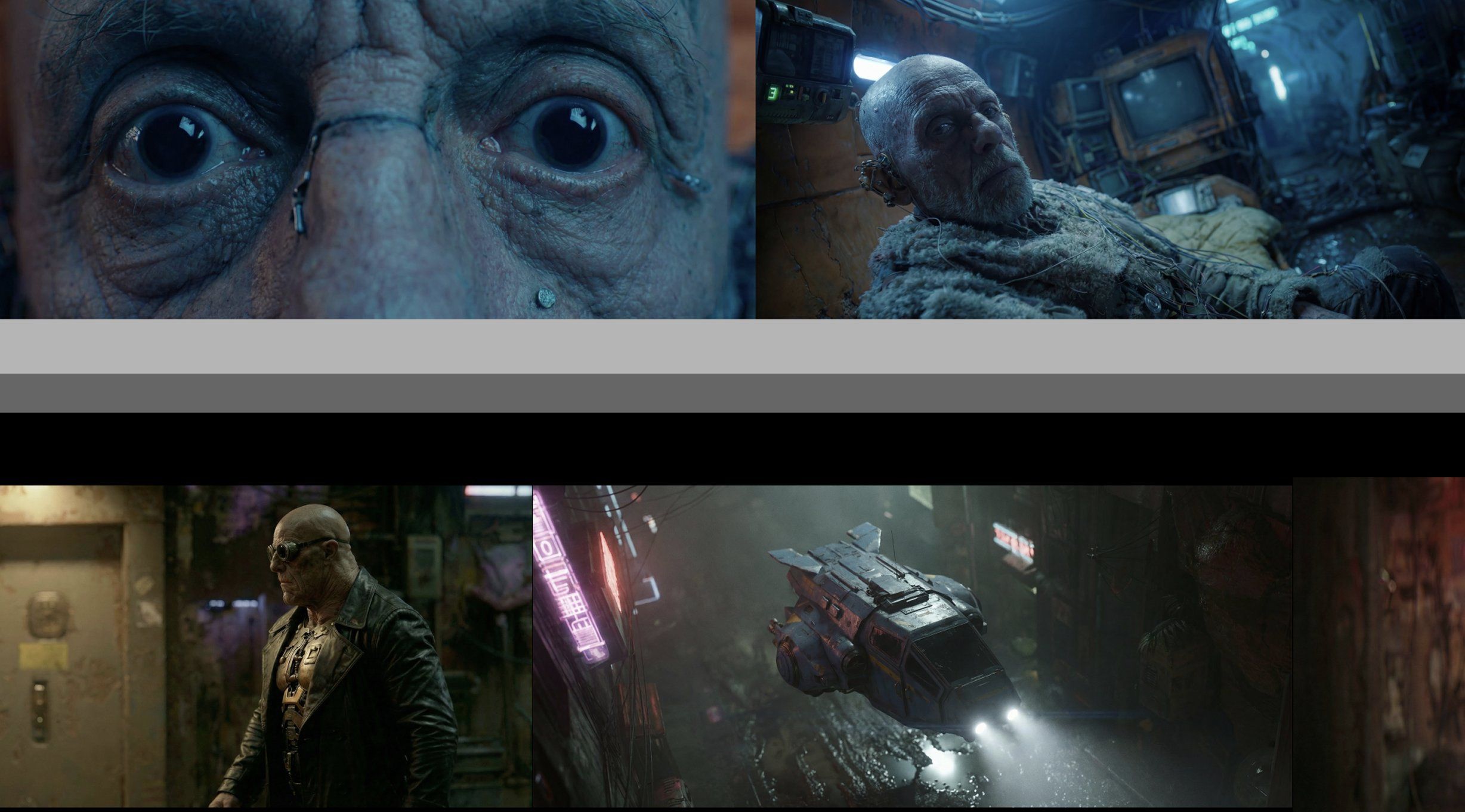

Many claim that Gossip Goblin is the best AI filmmaker in the world.

But was he featured on Last Week Tonight? Did John Oliver fondly call his film a “visual cacophony”? I don’t think so.

But I can say that his new film THE PATCHWRIGHT is a masterpiece (10M+ views).

The problem is, nobody knows how he actually makes these.

Until now.

He let me share every step of the workflow with you 🧵👇

First, check out the film in all its glory if you haven’t yet:

This is 4 months of work for 20 minutes of film, set in a world he's been building for years

The Patchwright didn't start from scratch.

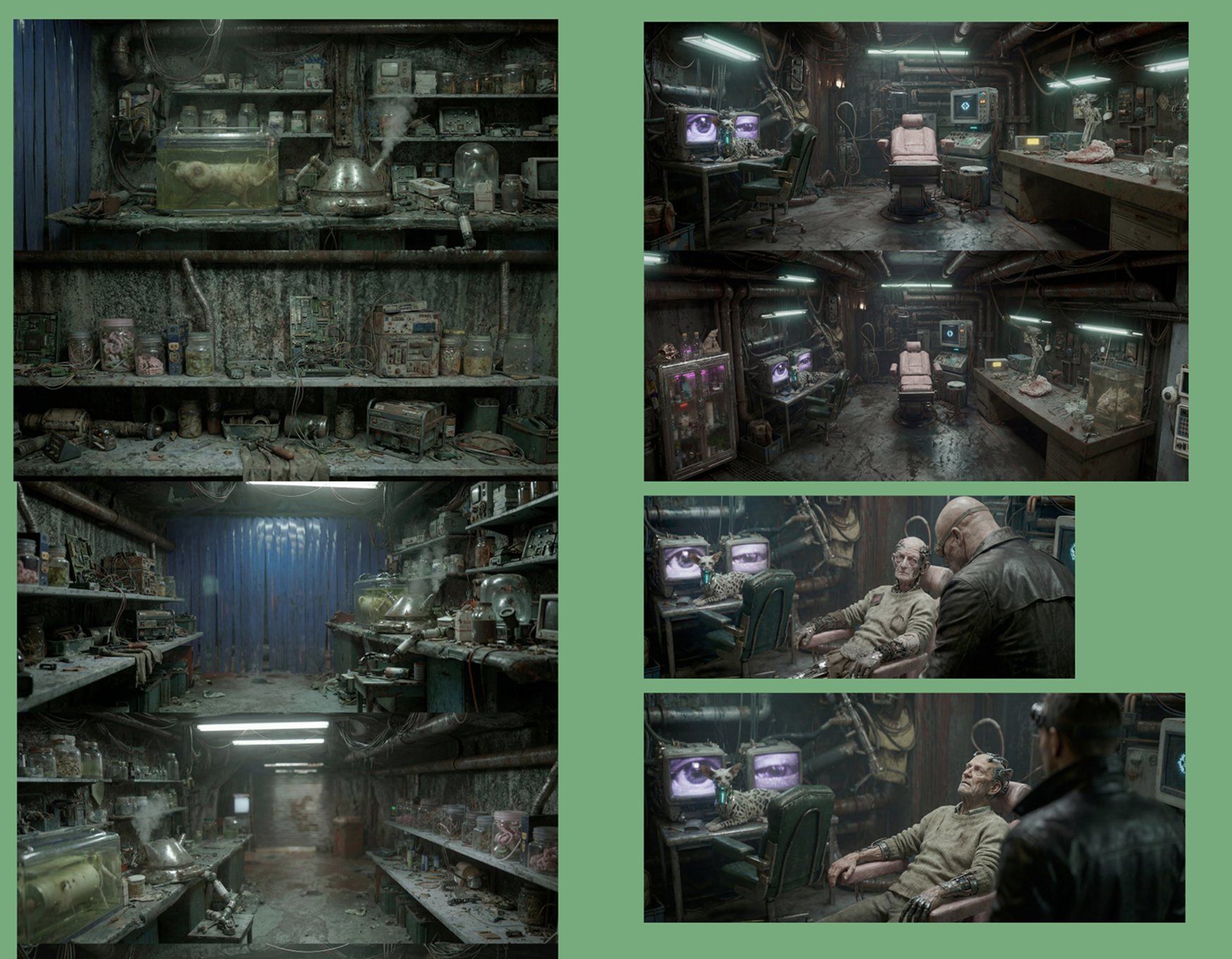

Zach has been making 2 to 3 episodes a week in this universe for 11 months. By the time he sat down to write the film, he already had tens of thousands of Midjourney images to pull from, established characters, a custom alphabet, even a custom language.

People love to ask about the tools but honestly the accumulated story bible is doing a huge amount of the heavy lifting here.

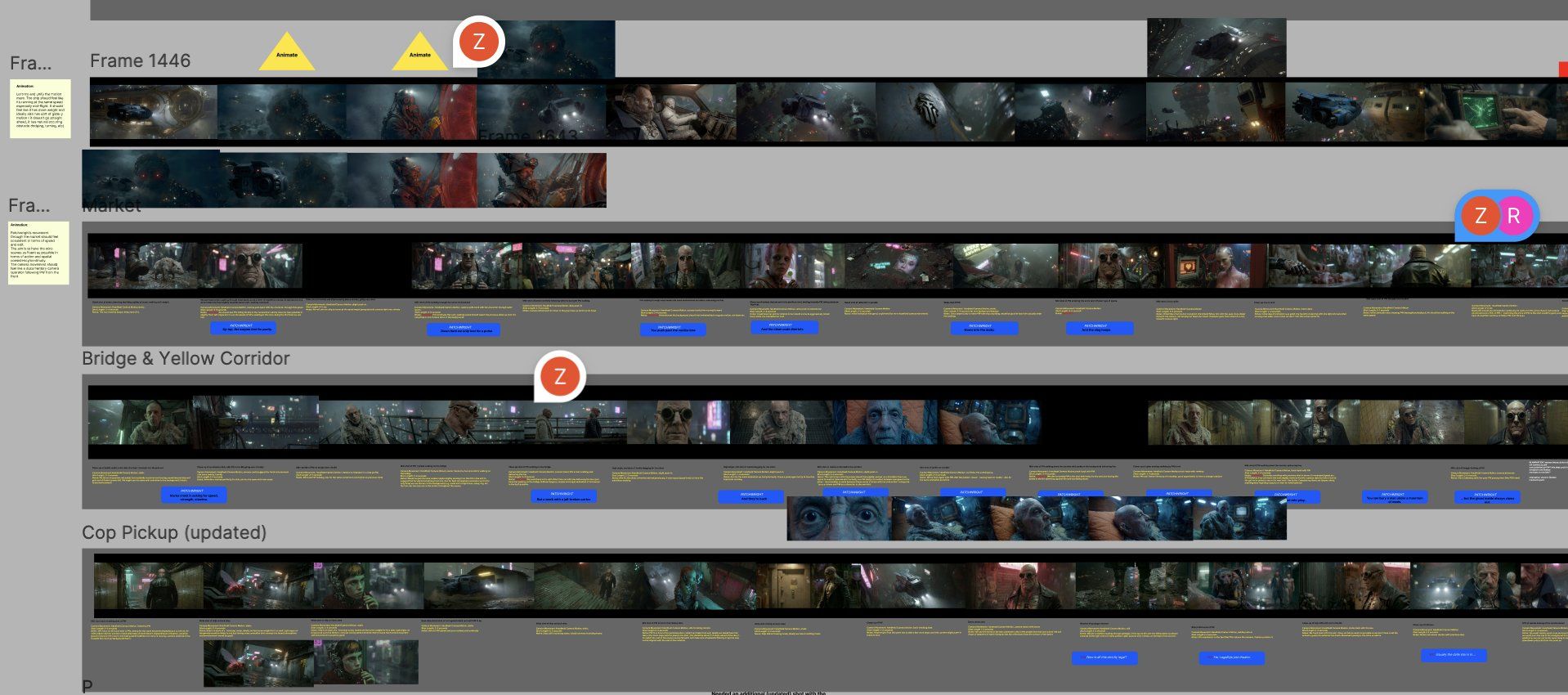

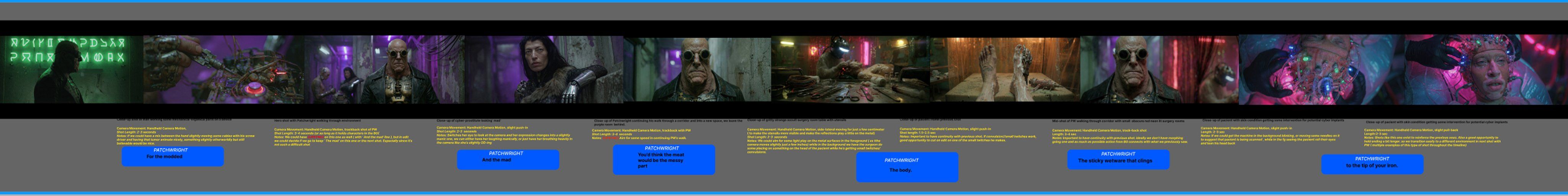

3/ Visual exploration follows the story beats

The film opens in a corporate penthouse, descends through an aerial ship sequence, and lands on the gritty street.

So they broke their aesthetic exploration down by altitude. Penthouse, ship interior, midair city, street level, wet market.

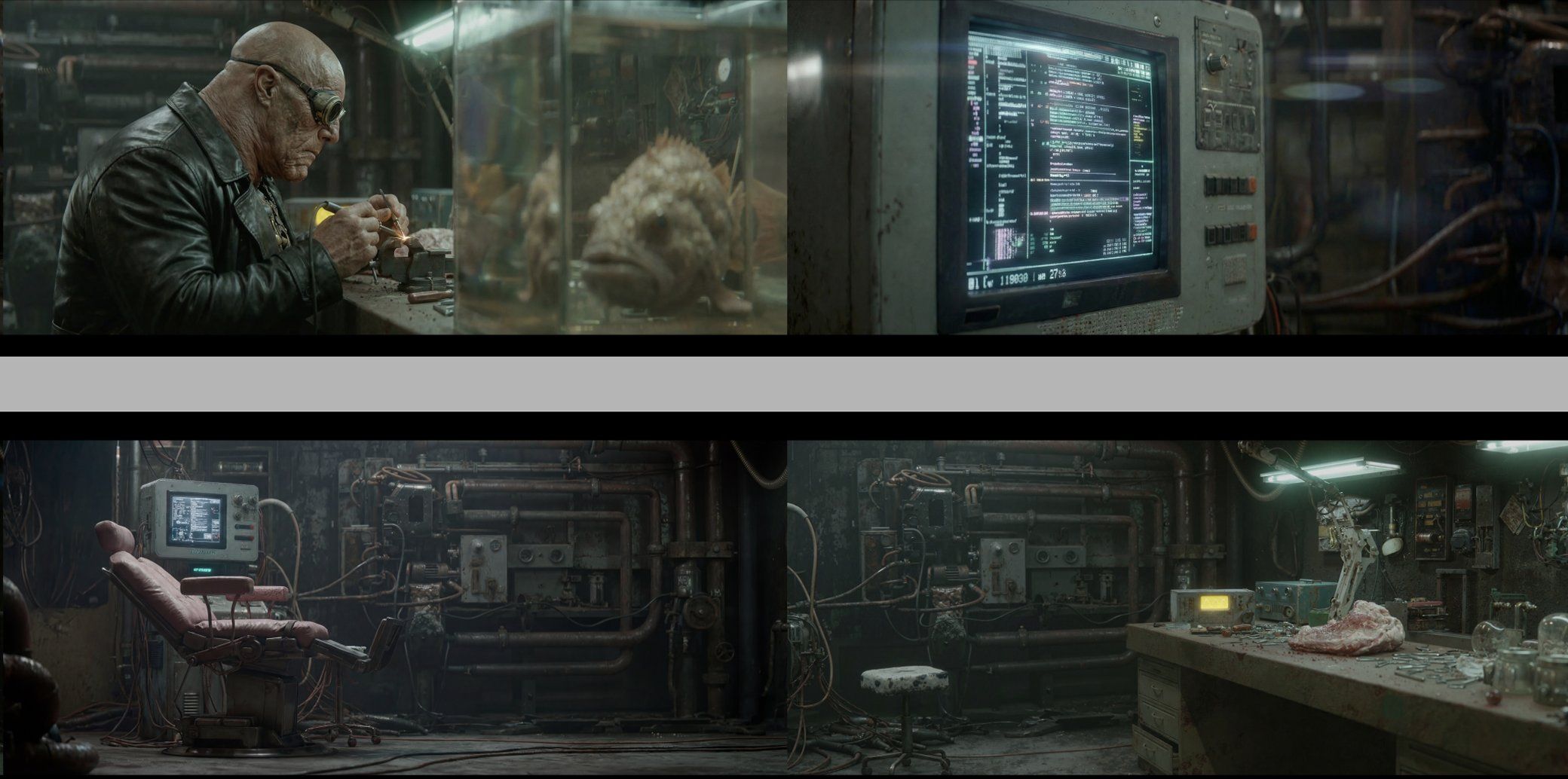

Before animating anything in Kling, each location got its own dedicated Midjourney pass, followed by a nano banana pass to insert consistent characters into those Midjourney "plate shots".

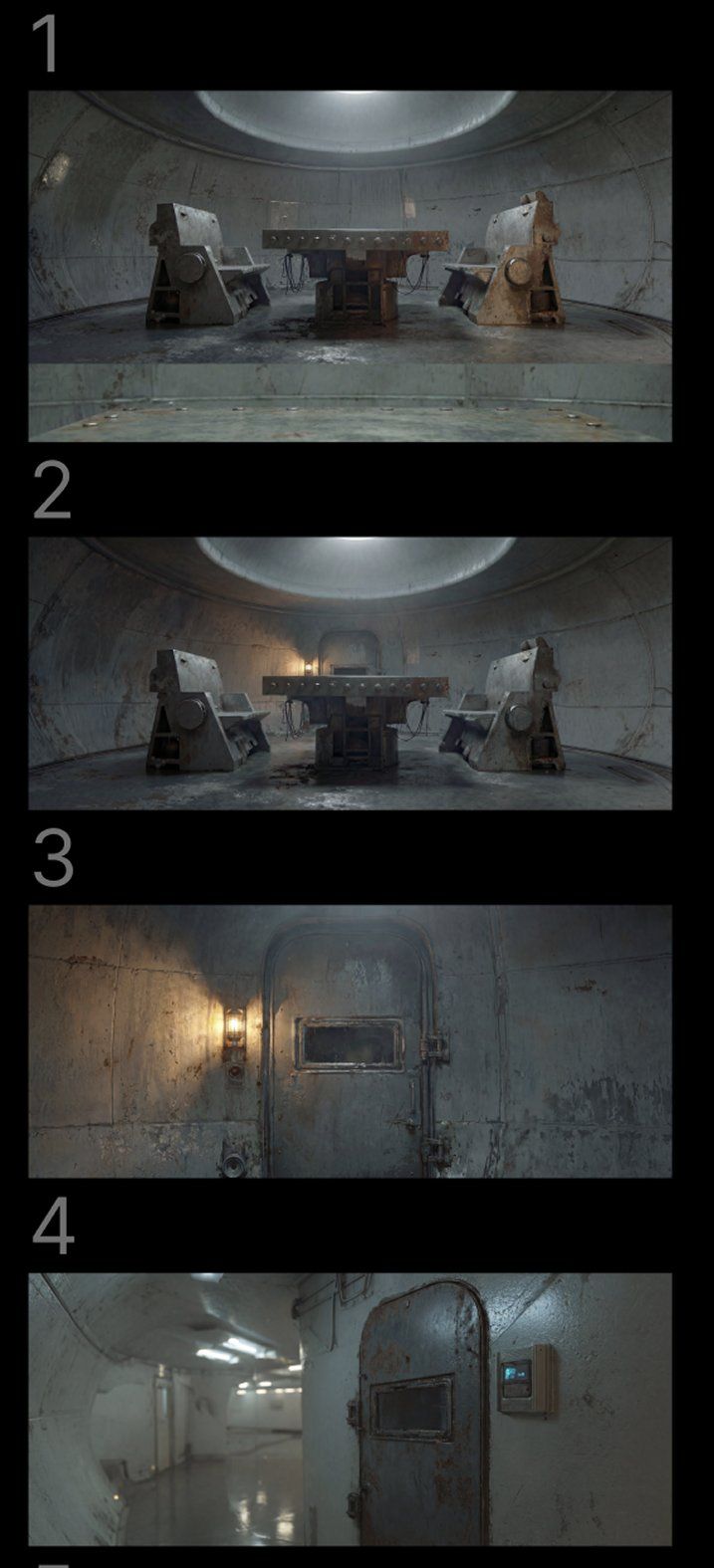

4/ Push past the AI default, even when it looks fine

The interrogation room could've been a crappy table and gray walls like every other interrogation room in every other film.

Instead they pushed for a panopticon. Circular, no orientation, with a structure on top looking down. Then they kit-bashed brutalist chair references in nano banana to fill in the details.

Whatever the model gives you by default is going to look like what it gives the next thousand people who ask.

5/ Midjourney v7 still beats 8.1 for this kind of work

Zach thinks 8.1 has been sanitized again and v7 is closer to the peak of what Midjourney can actually do.

The real lever, though, isn't prompt syntax or some fancy JSON prompt. It's spending real time building custom profile codes that reflect your taste, then layering them with mood boards and style refs.

That's how the Instagram creators with truly distinct styles are doing it. Prompt templates alone don't really get you there.

6/ Midjourney explores latent space, nano banana is the photoshop

His exact quote: "no image starts in nano banana. Nano banana is just our photoshop."

Midjourney has the widest range of aesthetic possibility, the weird color palettes, the greebles, the texture you can't really get anywhere else.

Going straight to nano banana skips all of that and you end up with its default visual grammar, which is faster but a lot more generic.

7/ Lock in your hero shots, then connect the dots

For each environment, the team nails a handful of hero shots that establish the lighting and tone.

Once those are locked, they use nano banana to fill in everything between them.

The gradient of light as you move from the penthouse altitude down to the street level, for example, gets extrapolated from a few anchor frames rather than designed shot by shot.

8/ Color and tone refs do most of the grading before you ever animate

They imagined the wet market as Hong Kong meets Bangkok meets a Kowloon night market. Hazy, wet, makeshift.

They built specific color and lighting refs around that idea and baked something like 90% of the final grade into the images themselves.

So by the time anything is moving, the look is basically already there in the frame.

9/ Build the world

He avoids low-hanging fruit like kanji lettering. They built their own alphabet plus a subscript based on Burmese, and the opening title literally morphs from alien script into their text.

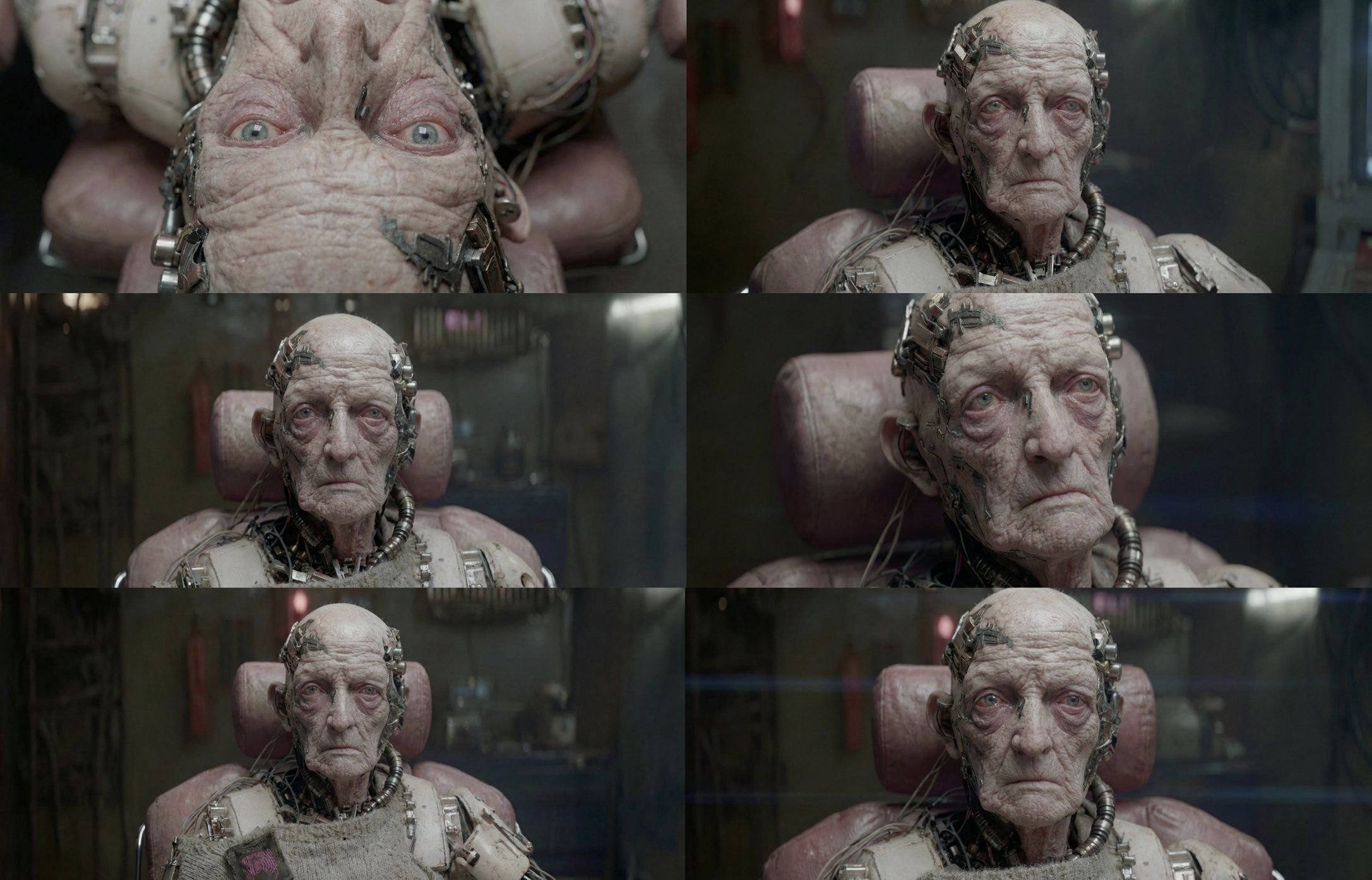

10/ Don't take anything for granted

The Patchwright doesn't drink from an IKEA mug.

They designed his tea kettle. The weird science experiments. The creature in formaldehyde on his shelf.

The textures of the workshop you barely see on camera.

Almost none of this exploration ended up in the final cut, but the small fraction that did is part of why the world feels lived-in instead of generated.

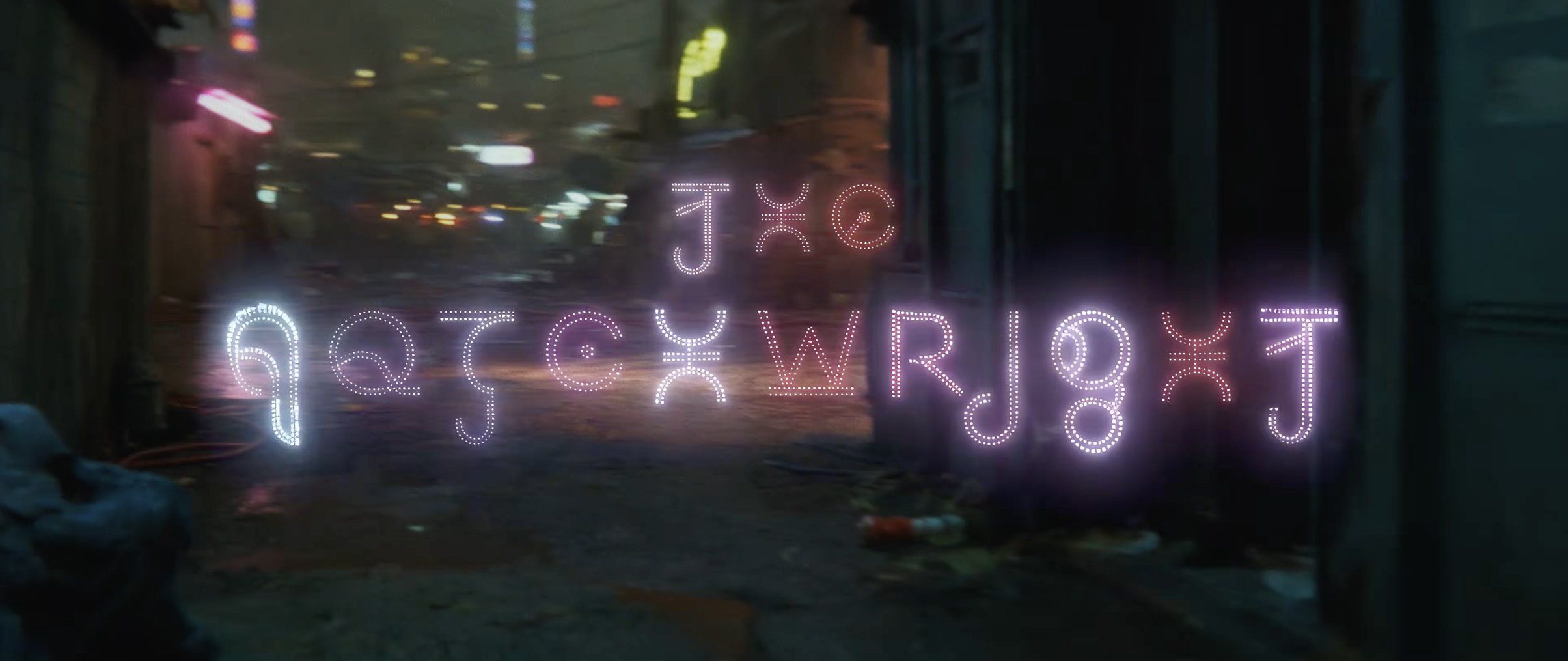

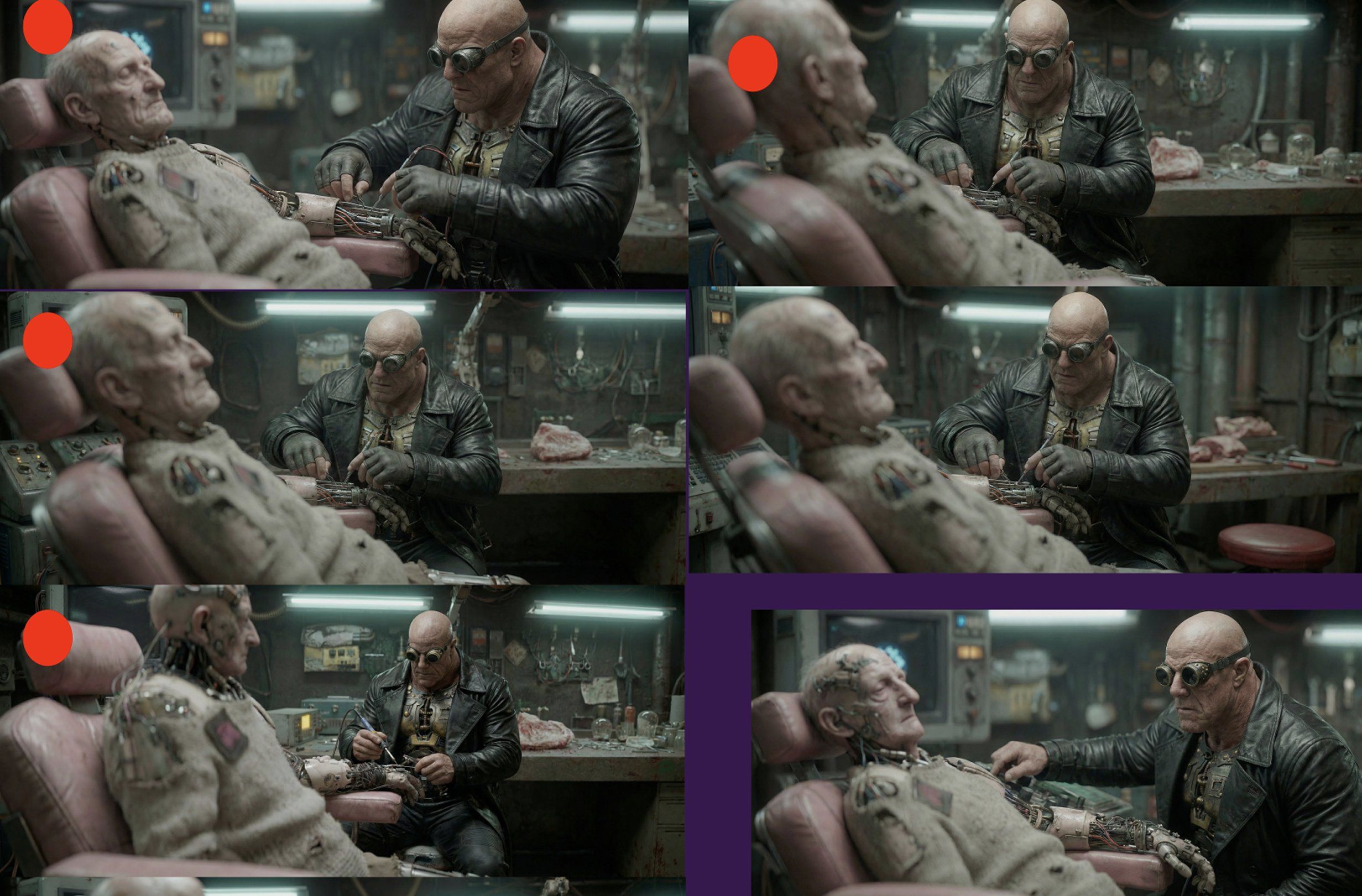

11/ Character work: no multi-shot, pure frame animations

The entire Patchwright was made frame by frame. They didn't use any omni-ref or multi-shot features.

He wants to control 100% of what we see on screen.

Going the hard route on this probably cost them a few months and is also a big part of why the film looks like itself rather than like everything else coming out right now.

12/ Modular character coverage, not a master sheet

For each character they generated close-ups, mediums, wides, and rear shots separately. Nano banana stitches those into environments shot by shot.

So when a scene needs the Patchwright walking through the market from behind, the recipe is back angle of the Patchwright, butcher character, environment plate, prompt.

You assemble the ingredients fresh each time instead of relying on one big reference sheet to carry the consistency.
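One way to picture that recipe is as a small data structure you rebuild for every shot. This is only a sketch of the idea, not Zach's actual tooling: `ShotRecipe`, `COVERAGE`, `build_shot`, and every file path below are hypothetical names made up for illustration, and nothing here calls nano banana or any real API.

```python
from dataclasses import dataclass, field


@dataclass
class ShotRecipe:
    """One shot's ingredients: reference images plus a text prompt."""
    environment_plate: str  # hypothetical path to the location plate
    character_refs: list[str] = field(default_factory=list)  # one image per character angle
    prompt: str = ""

    def inputs(self) -> list[str]:
        """Reference images for this shot: characters first, then the plate."""
        return [*self.character_refs, self.environment_plate]


# Hypothetical coverage library, keyed by (character, angle).
COVERAGE = {
    ("patchwright", "rear"): "refs/patchwright_rear.png",
    ("patchwright", "closeup"): "refs/patchwright_closeup.png",
    ("butcher", "medium"): "refs/butcher_medium.png",
}


def build_shot(plate: str, cast: list[tuple[str, str]], prompt: str) -> ShotRecipe:
    """Assemble a fresh recipe; fail loudly if an angle was never generated."""
    missing = [key for key in cast if key not in COVERAGE]
    if missing:
        raise KeyError(f"no coverage generated yet for: {missing}")
    return ShotRecipe(plate, [COVERAGE[key] for key in cast], prompt)


market_walk = build_shot(
    plate="plates/wet_market_wide.png",
    cast=[("patchwright", "rear"), ("butcher", "medium")],
    prompt="The Patchwright walks through the market, seen from behind.",
)
print(market_walk.inputs())
```

The point of structuring it this way is that a missing angle shows up as an error before you generate anything, instead of as a character who drifts off-model halfway through a scene.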

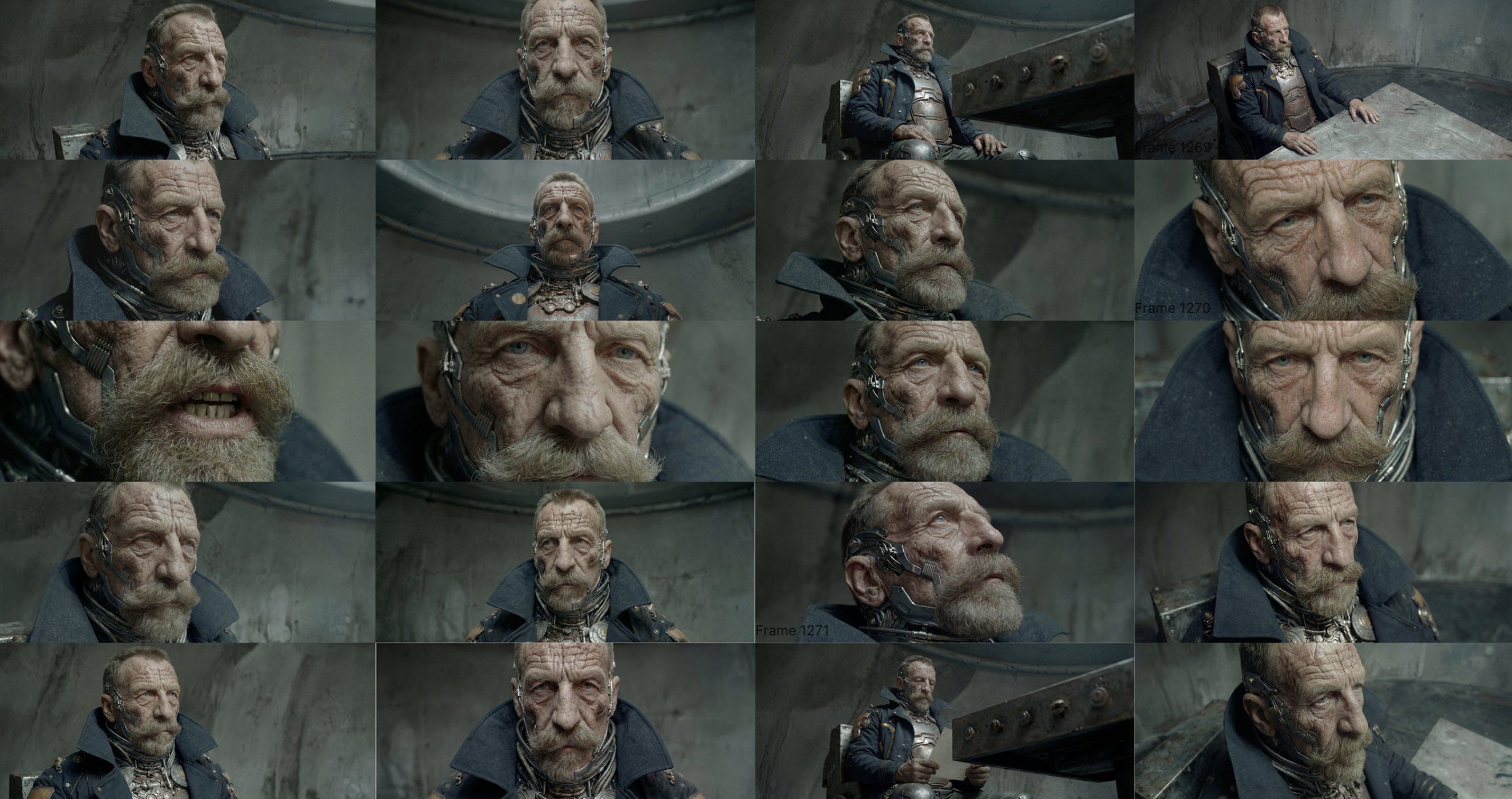

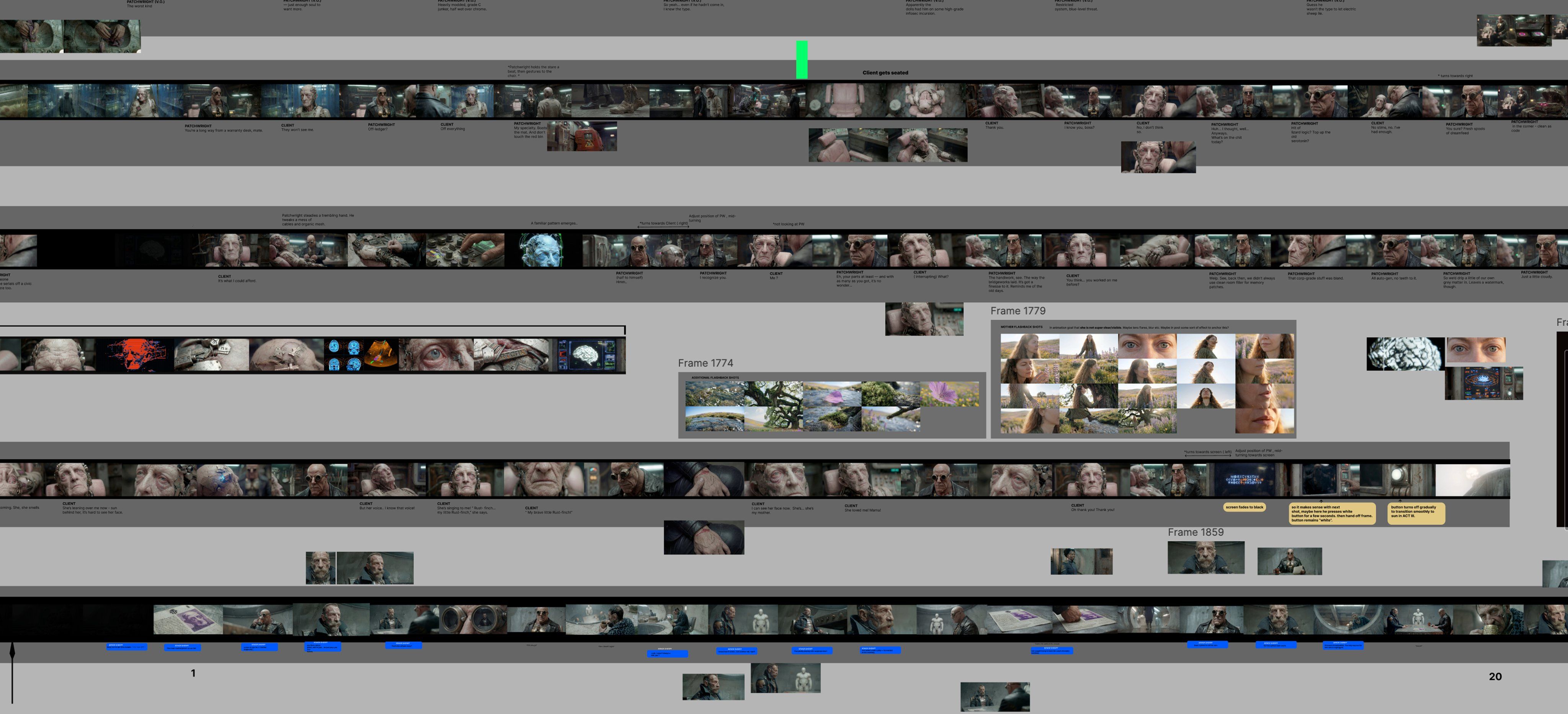

13/ The pre-nano-banana-pro era: literally painting on images

For the 9-minute interrogation room scene between two guys, the team was painting over images by hand.

Drawing arrows, marking cables, blocking beats.

Then feeding the marked-up image back to nano banana with notes like "show me again where the cable's protruding from there."

Hundreds of angles built that way, one at a time, back when no model could really do this natively.

14/ Voice: every single line is a real human

Every discernible line in The Patchwright is a real voice actor.

The Patchwright himself is voiced by an American, a former opera singer and ex-DJ, the same guy who voiced him in the original shorts.

They cast the female roles through a British casting website. Friends covered most of the random side characters.

ElevenLabs is fine if budget is the main constraint, but if you can swing real actors the performance comes through in a way synthetic voice still can't match.

15/ Kling did all the animations

For animations, The Patchwright is fundamentally a Kling film.

No other model came close to the level of detail, fidelity, and control that Kling gave them throughout the grueling 4-month production.

For some speaking performances, he further supplemented the base animations and lip sync with Sync Labs.

Sync Labs is finicky in real use. You have to splice the clips and the audio so the waveforms almost line up, then let Sync Labs extend the mouth movement a little.
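If you'd rather not eyeball the waveforms, the "almost line up" step can be roughed out numerically. The sketch below is my own illustration, not part of Zach's workflow and not a Sync Labs feature: `best_lag` is a hypothetical helper that brute-forces the sample offset at which two audio tracks overlap best, assuming both are already decoded into plain lists of samples at the same sample rate.

```python
def best_lag(reference: list[float], clip: list[float], max_lag: int) -> int:
    """Return the offset (in samples) at which `clip` best matches `reference`.

    A positive result means the sound in `clip` arrives that many samples
    later than in `reference`, so you would trim that much from the clip's head.
    """
    def score(lag: int) -> float:
        # Sum of sample-by-sample products over the overlapping region.
        total = 0.0
        for i, ref_sample in enumerate(reference):
            j = i + lag
            if 0 <= j < len(clip):
                total += ref_sample * clip[j]
        return total

    return max(range(-max_lag, max_lag + 1), key=score)


# Toy example: the same "syllable" (1, 3, 1), two samples later in the clip.
voice_take = [0.0, 0.0, 1.0, 3.0, 1.0, 0.0, 0.0, 0.0]
clip_audio = [0.0, 0.0, 0.0, 0.0, 1.0, 3.0, 1.0, 0.0]

lag = best_lag(voice_take, clip_audio, max_lag=4)
print(lag)  # divide by the sample rate to convert to seconds
```

This loop is far too slow for full-length audio. For real files you would want an FFT-based correlation from a numerics library, and you would still check the result by ear.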

16/ AI is wet clay

This was my favorite line from the whole conversation.

"AI is not going to behave the way you want. It's like wet clay. You understand the medium, you know how to manipulate it, but it has a mind of its own."

If you come from a traditional film background where everything on set is intentional, you'll fight this all day.

The work is more about giving the model the right inputs and being open to the happy accidents than directing every detail.

17/ The most underrated hire in any AI film team is a dedicated editor

Zach put it well. "What does it mean to be a director when everything is happening in silos? No set. No there there."

A director writes the script, builds storyboards, gives shot-level notes. But the cohesion of an AI film really happens in the edit.

An editor fluent in AI's specific failure modes might be the single most underrated role in this whole space.

18/ Color grade: bake 90% in, polish the last 10%

By the time animation starts, most of the grade is already inside the images.

The final grade is mostly unifying discrepancies between shots and bumping the muted sections.

One specific note from Zach worth flagging: no upscaling.

Every upscaler currently shipping exaggerates artifacts, oversharpens skin, smooths out the greebles, and corrupts the aesthetic. He doesn't touch them.

19/ Add grain.

Three years ago grain was a tool to hide AI artifacts.

Today Zach uses it because AI's default surface is just too clean and waxy.

The grain brings back some grit, fights the polish AI defaults to, and helps everything feel rooted in a real space rather than rendered into one.
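Zach didn't say which grain tool he uses, and in practice you'd add grain in your editor or compositor. Still, the underlying idea is simple enough to sketch: add a little random noise to every pixel. The `add_grain` function below is a hypothetical, simplified illustration that treats a frame as a grid of 0 to 255 grayscale values and uses plain Gaussian noise, which is cruder than real film grain.

```python
import random


def add_grain(frame: list[list[int]], strength: float = 8.0, seed=None) -> list[list[int]]:
    """Return a copy of `frame` with Gaussian noise added to each pixel.

    `strength` is the noise standard deviation in pixel levels; results are
    clamped to the 0..255 range. `seed` makes the noise repeatable.
    """
    rng = random.Random(seed)
    return [
        [min(255, max(0, round(px + rng.gauss(0.0, strength)))) for px in row]
        for row in frame
    ]


# A flat mid-gray frame: the "too clean" surface the grain is meant to break up.
flat_frame = [[128] * 4 for _ in range(3)]
grainy_frame = add_grain(flat_frame, strength=8.0, seed=1)

for row in grainy_frame:
    print(row)
```

For moving footage you'd generate fresh noise per frame (a different seed each time) so the grain shimmers instead of sitting on the image like a static texture.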

20/ Sound is the glue

Zach pays real artists to score key sections of the film.

Custom SFX gets layered through to heighten the grime, the wet market textures, the workshop clanks, the foot traffic on the bridge.

AI music has gotten good enough to use in spots, but the combination of real composition, AI music, and custom SFX is what makes a sequence of disparate AI clips actually feel like one cohesive piece of cinema.

21/ The takeaway

Here's what I keep thinking about after this conversation:

Every AI filmmaker in the world has access to roughly the same tools. Same Kling, same Nano Banana, same Midjourney.

The technical playing field is essentially flat.

So the question becomes: what are you building that no one else is?

Zach’s an incredible storyteller, but what lingers with me about this film is the formaldehyde creature on a shelf you barely register. The hand-designed mug.

The Burmese-derived subscript on a sign that's in frame for half a second.

Nobody asked Zach to design any of this. Nobody would've noticed if he hadn't.

But the reason the world feels real on screen is that somebody spent real time on the stuff that 99% of filmmakers would skip.

That wraps this breakdown!

Tomorrow I’m releasing my interview with Kavan to detail his process on Chronicles of Bone!

Let me know if you’ve been enjoying me interviewing other top directors!

It’s nice to get to promote their films and share their workflows with you 🙂

If you want to dig deeper into a community that helps AI filmmakers make a successful living, I’d highly encourage you to join Rourke Heath’s community:

I occasionally teach classes for free there, and in the absence of having a course myself, it’s really nice to send people to the best educator on the planet!

-PJ