- PJ's Newsletter - AI Filmmaking

You're using Seedance 2.0 wrong (try this massive time-saver instead)

I pillaged this workflow from vikings and the gates of hell.

I love writing newsletters because I can just cut out all the marketing bullsh*t and just give you guys the straight sauce with no filler.

I’ve got two crazy new workflows for Seedance 2.0.

This is about to be you over the course of the next 5 minutes:

Before we get into it, quick life update:

I’ve been co-working with the House of David (Wonder Project/Innovative Dreams) team at their Manhattan Beach Studios location.

It’s been so energizing to co-work with the best in the business, and I can’t wait to share more about some upcoming co-productions we’ve got in the works!

My studio, Genre, has a bunch of awesome commercials coming up, and I have an insane new sci-fi project, but they're still in progress, so I can’t share those workflows yet.

So after watching two crazy 20-minute episodes from the Higgsfield team, I asked them if I could interview their directors and break down how they made them so fast.

Big caveat: I don’t like the whole “made this in a few days” narrative anymore. It’s killing us when a major studio contacts us and asks for an ad in “a few days.”

(true story this week; and yes we can sometimes do it but FML)

But that aside, yes, you can use this workflow to create an insane film in a short period of time with a very small team.

This is in no way a sponsored post; it’s just a cool workflow!

It was refreshing to see other top directors share everything, and I’m able to share it with you, so massive shoutout to their talented teams and their openness to share this workflow.

HELLGRIND

If you haven’t seen the first film, Hellgrind, check that out here.

Phase 1: Pre-Production & Narrative

If you’re a fan of anime or Isekai, this series is for you. I spoke with 2 of the 5 directors for an hour, and they said this project took the momentum from their previous work, Arena Zero, and evolved it into a more complex narrative with higher stakes.

The Scripting and Treatment Phase

Before any generation began, the team spent time iterating on the script and developing a comprehensive director’s treatment.

Even though AI allows for "imaginary freedom" to change things on the fly, they started with a solid compound script that was split between four directors. This let them hit this crazy deadline by breaking the project into sections that each director handled individually.

Moodboarding in a vibe-coded collaboration app

To keep a team of four directors aligned, they vibe-coded an app called CollabHub, a central hub for sharing character designs, location references, and visual inspiration.

They heavily utilized Higgsfield Soul Cinema to generate early mood board images because it allowed them to visualize unique elements - like the specific "Post-cyberpunk daytime" look - that don't exist in real-world reference photos.

This phase established the "Red and White" color palette that defines the film's visual identity.

Phase 2: Asset Development & Look Dev

Character Creation with Higgsfield Soul Cast

Every character in the film was developed using Higgsfield Soul Cast to give them both realism and a unique look.

The team focused on creating highly detailed character sheets that included front views, back views, and close-ups to maintain consistency.

These sheets even included specific props, like the characters' skateboards, to ensure the AI understood every element of their kit. Character sheets were generated for each character in a huge variety of emotional and physical states to keep consistency when generating with Higgsfield Seedance 2.0.

Mastering the Master Location

The team generated a single "master" image of the central museum in Higgsfield Soul Cinema, then used Nano Banana Pro to "spin the camera" and generate five distinct angles, including the notoriously difficult back view.

These were stitched into a single collage that served as the primary spatial and visual reference for Higgsfield Seedance 2.0, ensuring the building remained consistent across every cut.

To handle the story’s progression, they created three distinct versions of this master asset: the pristine initial arrival, a "destroyed" version for the battle, and a dark, moody version for the film’s dramatic climax.

Phase 3: Technical Pre-vis

Blender Blocking & Geospatial Mapping

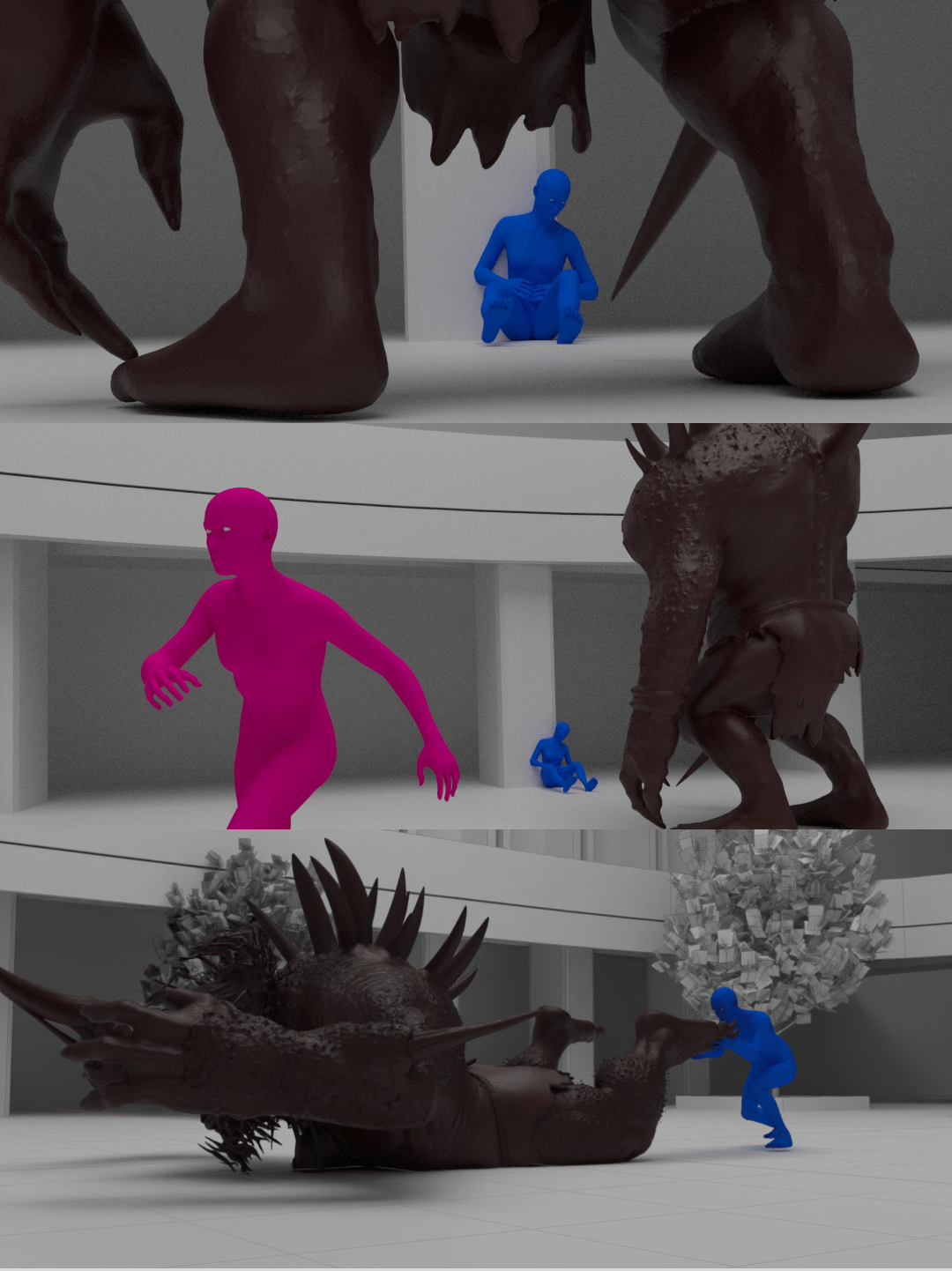

To solve the problem of complex character blocking, the team built low-poly versions of their locations in Blender. They placed simple colored shapes to represent characters (e.g., pink for Lulu, brown for the monster) and used these as a "spatial map." This allowed them to dictate exactly where characters were standing and moving in 3D space before hitting the generate button.

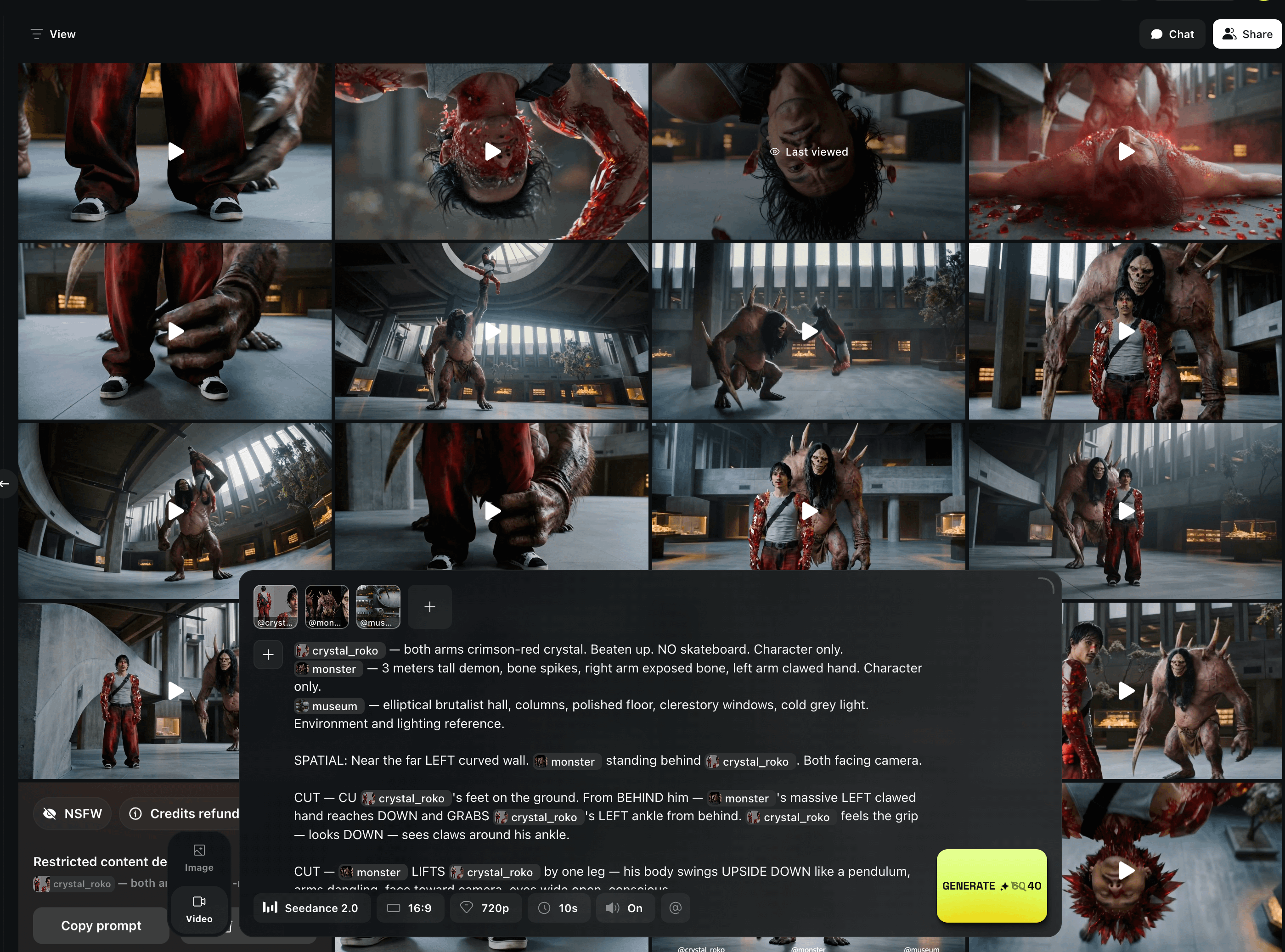
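If you want to keep the same blocking info alongside the rendered map, here is a minimal sketch of the idea in Python. To be clear, the team used rendered Blender images, not code; the `Marker` class, the coordinate convention, and the positions below are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Marker:
    """One colored placeholder shape in the low-poly blocking scene."""
    character: str
    color: str
    x: float  # metres, positive = camera right (hypothetical convention)
    y: float  # metres from camera (hypothetical convention)


def describe_blocking(markers: list[Marker]) -> list[str]:
    """Turn marker positions into plain-language blocking notes, left to right."""
    notes = []
    for m in sorted(markers, key=lambda m: m.x):
        side = "left of" if m.x < 0 else "right of" if m.x > 0 else "on"
        notes.append(
            f"{m.character} ({m.color} marker): {abs(m.x):g} m {side} "
            f"the center line, {m.y:g} m from camera"
        )
    return notes


# Colors follow the article (pink for Lulu, brown for the monster);
# the positions are invented.
markers = [
    Marker("Monster", "brown", x=2.0, y=6.0),
    Marker("Lulu", "pink", x=-1.5, y=3.0),
]
for line in describe_blocking(markers):
    print(line)
```

Notes like these could sit next to the map image so the written blocking and the picture agree.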

Claude as the Prompt Architect

The team used Claude to bridge the gap between their Blender storyboards and the final animation prompts. By uploading their spatial maps to Claude, the AI could generate precise instructions for Higgsfield Seedance 2.0 based on the script's requirements.

Phase 4: Production (The Generation Sprint)

Scene Coverage in Higgsfield Seedance 2.0

The bulk of the animation was done in Seedance 2.0, using 15-second segments to build out the scenes. The directors used a "context-first" prompting method, where the first prompt established the environment and positioning, followed by extreme close-ups for dialogue. Because they were generating at a high volume, they could pick the best 3-4 cuts out of every 20 generations to ensure the action felt intentional.
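To get a feel for that volume, here is some back-of-the-envelope math in Python. It assumes roughly 20 minutes of finished runtime, that every kept cut runs its full 15 seconds in the edit, and that the "3-4 out of 20" keep rate holds across the whole film. Real edits trim shots, so treat these numbers as a floor, not a report of what the team actually generated.

```python
import math


def generation_budget(runtime_s: int, segment_s: int, keepers: int, batch: int) -> tuple[int, int]:
    """Return (segments needed, total generations) for a given keep rate."""
    segments = math.ceil(runtime_s / segment_s)
    # `keepers` usable cuts come out of every `batch` generations
    generations = math.ceil(segments * batch / keepers)
    return segments, generations


segments, high = generation_budget(20 * 60, 15, keepers=3, batch=20)
_, low = generation_budget(20 * 60, 15, keepers=4, batch=20)
print(f"{segments} segments, roughly {low} to {high} generations")
# prints "80 segments, roughly 400 to 534 generations"
```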

Dialogue and Pacing

To ensure the lip-sync and acting felt realistic, the directors intentionally added "pacing" and pauses between lines of dialogue.

If the dialogue is too crowded in a single prompt, the AI tends to speed up the performance, leading to "slop-like" movements.

By giving the characters room to breathe, they maintained a cinematic acting style that feels more human than automated.
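One way to sanity-check pacing before you generate is to budget the time each line needs. This is a rough sketch, not the directors' method: the speaking rate (2.5 words per second) and the 1.5-second pause are my guesses, and the sample lines are placeholders, not dialogue from the film.

```python
def pack_dialogue(lines, segment_s=15.0, words_per_s=2.5, pause_s=1.5):
    """Greedily group lines so speech plus pauses fits inside one segment."""
    segments, current, used = [], [], 0.0
    for line in lines:
        # estimated speaking time plus a breathing pause after the line
        cost = len(line.split()) / words_per_s + pause_s
        if current and used + cost > segment_s:
            segments.append(current)
            current, used = [], 0.0
        current.append(line)
        used += cost
    if current:
        segments.append(current)
    return segments


# Placeholder dialogue, invented for this example.
lines = [
    "We should not be here after dark, you know that.",
    "Then stay behind. I am going in with or without you.",
    "Fine. But if that thing wakes up, you are carrying me out.",
]
for i, segment in enumerate(pack_dialogue(lines), start=1):
    print(f"Segment {i}: {len(segment)} line(s)")
```

If a segment ends up with more lines than feels natural, that is the cue to split the prompt rather than cram the performance.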

(I was surprised to learn all performances were AI)

Pro tip: just give Seedance audio from an actor’s performance and it’ll integrate it onto your character’s face.

(I can’t share vids on beehiiv, but click if you want to see it)

Voice Consistency

The team achieved voice consistency by including a short, one-sentence description of each character's voice in every single prompt (e.g., "Deep Japanese voice" or "25-year-old American accent").

Higgsfield Seedance 2.0 effectively assigned these descriptions to pre-generated voice profiles, keeping the characters' voices consistent across scenes.

For more difficult non-human characters, they relied on more iterative "random" generations until the right persona emerged.
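The "same voice sentence in every prompt" habit is easy to automate. Here is a small sketch, assuming you keep your own lookup of voice descriptions; the character names `HERO` and `RIVAL` and the sample prompt are hypothetical, and only the two voice descriptions come from the team's examples.

```python
def add_voice_lines(prompt: str, voices: dict[str, str]) -> str:
    """Prefix the prompt with a voice sentence for each character it mentions."""
    lines = [
        f"{name} speaks with a {description}."
        for name, description in voices.items()
        if name in prompt  # simple substring match, good enough for a sketch
    ]
    return " ".join(lines + [prompt])


# Hypothetical character names mapped to the example descriptions.
voices = {
    "HERO": "deep Japanese voice",
    "RIVAL": "25-year-old American accent",
}
prompt = "Extreme close-up of HERO. HERO says: 'We move at dawn.'"
print(add_voice_lines(prompt, voices))
```

Keeping the descriptions in one place means the wording never drifts between prompts, which seems to be the whole trick.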

Phase 5: Post-Production & Stitching

The Master Edit Workflow

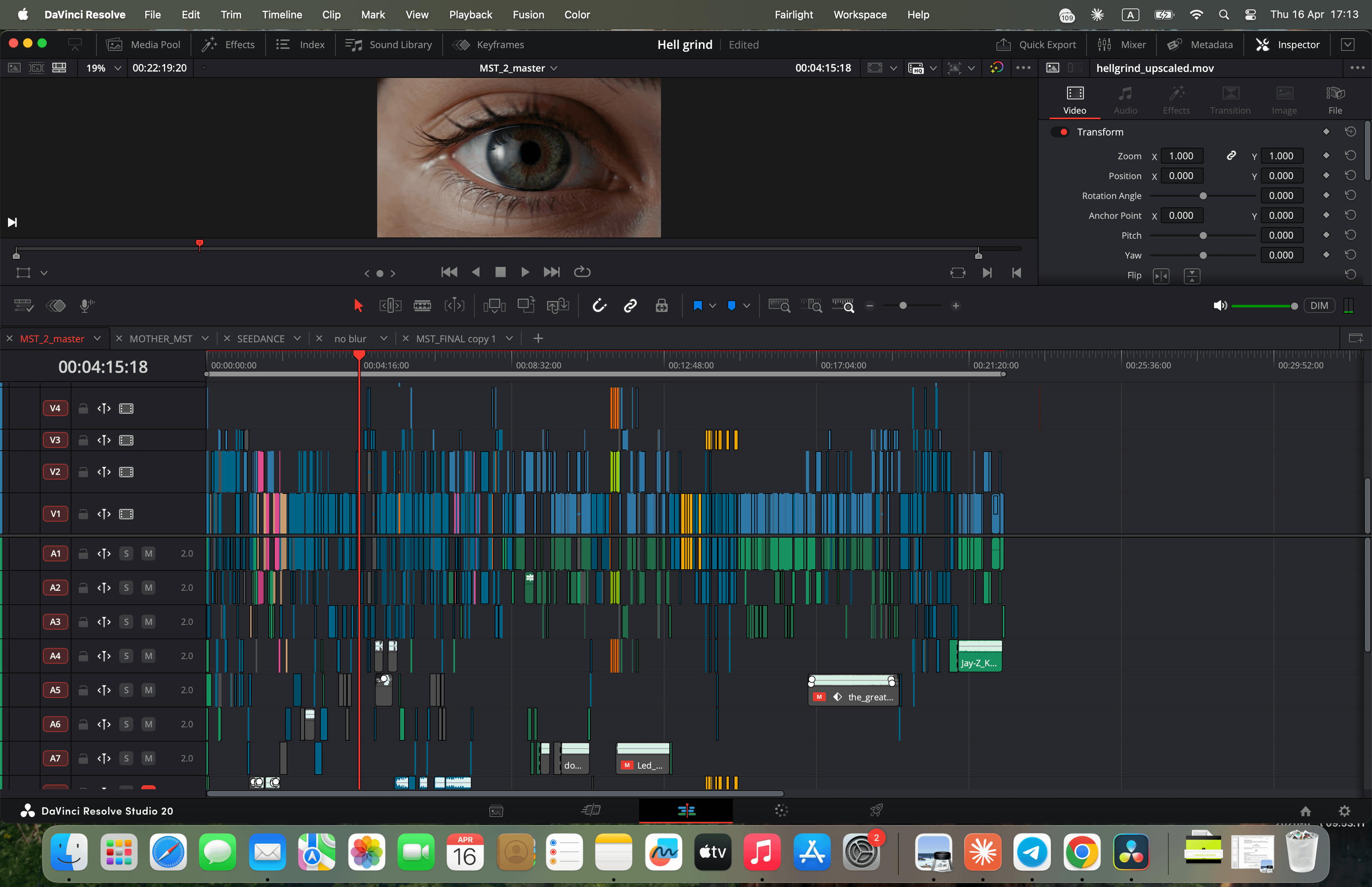

With five directors generating different scenes simultaneously, the team utilized a "Director of Editing" to manage the master file.

Each director built their assigned sequences on their own machines and sent XML files to the lead editor for final assembly.

This decentralized approach allowed them to generate 20 minutes of content in a tight timeline.

Final Polish and Color Grading

The final cinematic look was achieved in DaVinci Resolve, where the team focused on color matching the AI output.

They leaned heavily into "film-like" post-processing, adding grain, halation, and glow to soften the digital edges of the AI.

Rather than doing heavy VFX, they focused on these subtle photographic textures to make the final product feel like a high-end feature film.

Okay that’s a wrap on Hellgrind.

MORK

Here’s their next film, Mork.

Phase 1: Narrative & Strategy

Malik Zenger, a Cannes-winning music video director, joined the Higgsfield Originals team just three weeks prior with no previous AI experience.

He brought a traditional "old school" filmmaking lens to the project, focusing on narrative depth and realistic cinematography rather than just "cool" AI shots.

His goal was to prove that AI tools can deliver the same emotional weight as a high-budget live-action series like Game of Thrones.

The AI Writer’s Room

Despite having existing assets, Malik took the team back to a "writer's room" phase to develop a real screenplay.

Using Claude as a collaborative partner, he workshopped a revenge story that centered on a complex relationship between two brothers.

This shift turned a one-dimensional "action" piece into a full narrative season arc, complete with value shifts and character secrets.

Organizing the Universe in Figma

To manage the complex Viking world, Malik built a massive Figma board organized into "Scene Cards."

Each card detailed the scene name, the necessary characters, and specific location requirements.

This visual hierarchy allowed the team to track "beats" (like a child grabbing a wooden sword) to ensure the story's themes were visually established early on.
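If Figma isn't your thing, the same card structure fits in a few lines of Python. This is only a sketch of the fields described above; the `SceneCard` class, the scene names, and the characters are invented, and only the wooden-sword beat comes from Malik's board.

```python
from dataclasses import dataclass, field


@dataclass
class SceneCard:
    """One card on the board: scene name, cast, location, and story beats."""
    name: str
    characters: list[str]
    location: str
    beats: list[str] = field(default_factory=list)


def scenes_with_beat(cards: list[SceneCard], keyword: str) -> list[str]:
    """List the scenes whose beats mention a keyword, in board order."""
    return [
        card.name
        for card in cards
        if any(keyword.lower() in beat.lower() for beat in card.beats)
    ]


# Hypothetical cards for illustration.
board = [
    SceneCard("Village morning", ["Child", "Father"], "Village square",
              beats=["Child grabs a wooden sword"]),
    SceneCard("Feast", ["Jarl", "Bjorn"], "King's hall"),
]
print(scenes_with_beat(board, "sword"))
```

A lookup like this is a quick way to check that a theme gets set up early, before the scene that pays it off.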

Phase 2: Visual Asset Development

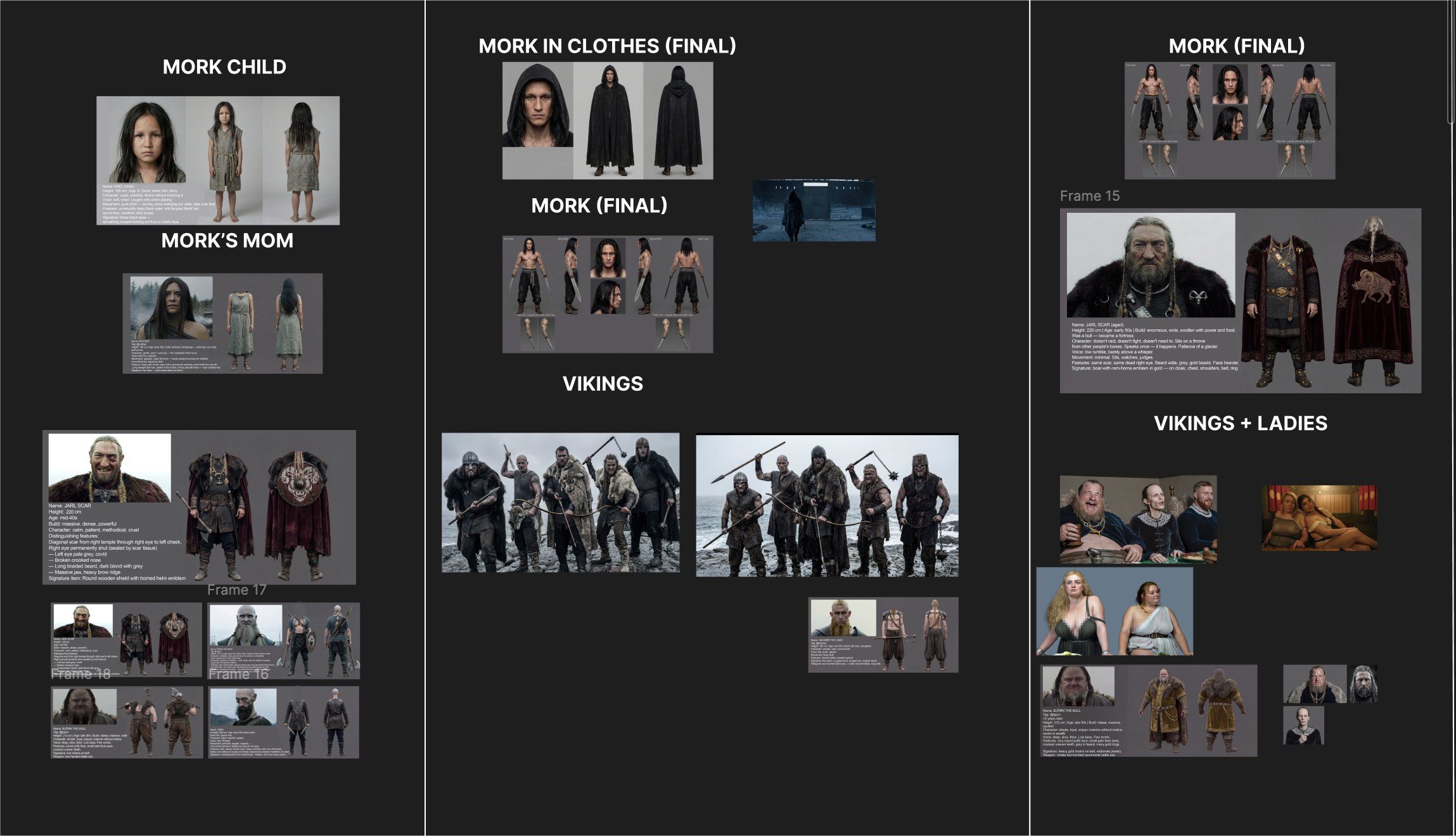

Character Architecture & The "Face-Blur" Hack

The entire character pipeline was anchored in Higgsfield Soul Cinema. Every shot in the film depended on this foundation.

Nano Banana Pro handled downstream detail refinement. Soul Cinema was the key weapon against the slop look.

Malik says it's the only model that can render the imperfect human face, which is exactly what kills the uncanny plastic feel.

One technical discovery proved essential: blurring the faces on wide-shot character reference sheets before rendering.

Left un-blurred, the model reads the miniature faces on the card and hallucinates exaggerated, cartoonish features onto the final video character.

Blurred, Higgsfield Soul Cinema's photorealism holds across every angle, lighting setup, and shot.

Extras and The Prop Library

The team didn't just focus on the leads; they built specific sheets for "Extras" (like tall, 2-meter-plus Vikings) and key props (like the Jarl's emblem).

Because these assets were defined as "Character Sheets" within Higgsfield Soul Cinema, the Seedance 2.0 model recognized them as permanent elements of the world.

This kept the Viking village feeling populated and logically consistent throughout the film.

Location Stress-Testing

Malik discovered that certain "beautiful" locations produced "sloppy" character animations.

He spent time stress-testing five or six different village environments generated in Higgsfield Soul Cinema until he found one where the characters moved with high realism.

In this workflow, the location isn't just a background—it’s a technical anchor that dictates the quality of the "acting" the AI can perform.

Phase 3: The Technical Pipeline

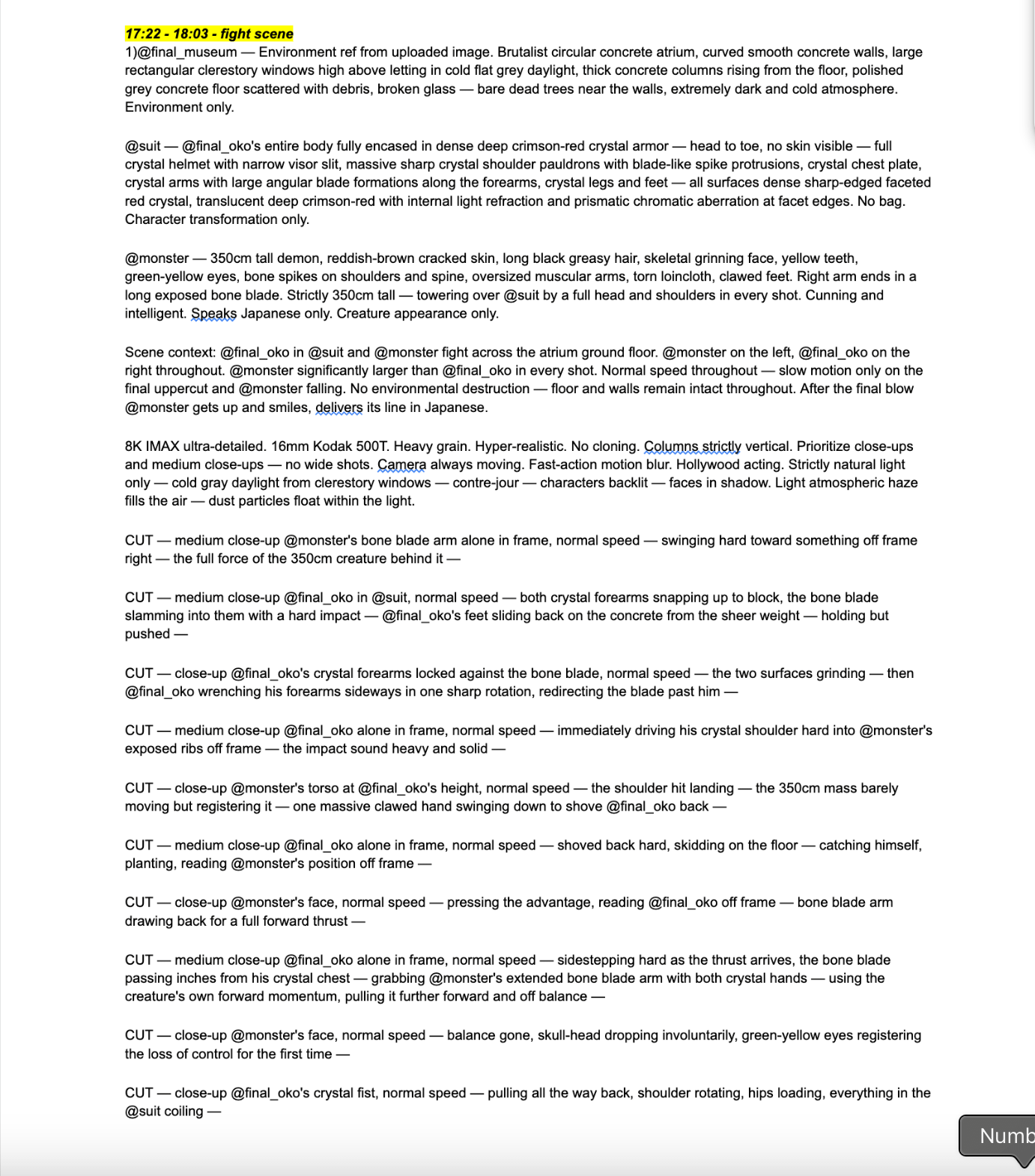

The Claude Prompt Architect

To bridge the gap between his directorial vision and the AI, Malik used a specific Claude pipeline. He would describe a cinematic shot (e.g., "Handheld, narrow grain, Mel Gibson's Apocalypto style") and Claude would translate that into a technical block for Seedance 2.0.

This ensured every prompt included specific camera information, like lens types and lighting cues, before the description of the action.

SCENE 93

FRAME 7A

KING’S HALL

— REFERENCE DEFINITIONS —

<<@ JARL>>>: Viking warlord — older now, greying beard longer, deep scar from forehead across left eye to jawline. Heavy fur cloak, chainmail beneath. Weathered, tired. Seated center of the long side of the table facing camera, in his throne — appearance only. Reference.

<<<@ BJORN>>>: Björn the Bull — massive, heavyset Viking warrior, thick neck, broad belly, long dark hair, grey in beard. Ornate golden-brown velvet tunic, fur mantle on shoulders, gold chains on belt. Seated to the right of the Jarl — appearance only. Reference.

<<<@ VIKINGS>>>: Five Viking noblemen — red-faced, drunk. Chainmail, fur, gold rings. Eating, drinking, flirting — appearance only. Reference.

@ women: Three women with full figures — fitted Viking dresses, laced bodices, braided hair, gold chains. Seated among the noblemen, pressed close — appearance only. Reference.

<<<@ KINGSHALL>>>: Viking great hall — vast dark stone chamber. Massive antler chandelier, candles. Large crimson red banner with emblem behind the throne. Cold grey-blue backlight from narrow windows. No fill light. Near-darkness. Long heavy feast table on raised platform with stone steps — the long side of the table faces camera, as seen in reference image. 10 people seated along one side — location and mood reference. Reference.

— TECHNICAL BLOCK —

Flat frontal fill lighting directly from camera position — even, soft, shadowless. Minimal contrast across face and body. No side light, no directional key light, no rim light, no dramatic shadows. The light should feel like a large softbox mounted directly above and around the camera lens, wrapping evenly around all surfaces. Neutral white light temperature.

Shot on ARRI Alexa Mini, Kodak Vision3 250D film emulation, subtle organic film grain. Natural skin with subsurface scattering, visible imperfections — pores, fine lines, uneven skin tone. Fabric texture captured naturally — rough weave, pilling, loose threads. Shallow depth of field with natural optical falloff on close-up view, full sharpness on full-body views. Clean silhouette edges, arms clearly separated from torso in full-body views. Consistent face, body proportions, clothing, and hair across all three angles. No text, no labels, no watermark, no other people, no props in hands, no colored lighting, no ornate decoration. No CGI look, no plastic skin, no airbrushing, no digital smoothing.

The image should feel like a behind-the-scenes wardrobe test photo from a Robert Eggers or Andrei Tarkovsky film — grounded, tactile, lived-in.

— PROMPT —

Wide establishing shot of @ KINGSHALL — vast dark chamber, columns in blackness, antler chandelier above, crimson banner behind the throne. The long feast table faces camera — its long side toward us, all 10 figures seated along it as in the reference. @ JARL center in his throne. @ BJORN to his right. @ VIKINGS and @ women spread along both sides — five noblemen and three women, 10 total. All in the middle of the feast. @ JARL chews slowly, silent. @ BJORN tears meat, mead in his beard. The rest — a tangle of eating and flirting. One nobleman whispers in a woman's ear, she smiles. Another laughs with his head back, goblet raised. A third argues across the table pointing with a drumstick. Two noblemen squeeze a woman between them, arms around her. The third woman is fed a piece of meat, she laughs. Everyone chewing, drinking, touching. The table a mess — roasted meat, bread, gold plates, goblets, spilled mead. In the foreground — a servant crosses frame with a tray, dark and out of focus. Camera holds then slow push-in toward the feast.

SFX only: the feast alive — overlapping laughter, goblets slamming, mead sloshing, meat tearing, chewing, men shouting, women laughing, whispering, plates clinking, drinking horn tilting, servant crossing — soft steps, tray clinking, fading. Candle flames guttering. The hall full of noise.
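The prompt above follows a fixed order: reference definitions, then the technical block, then the action. Here is a rough Python sketch of assembling that layout and catching any `@` tag used in the action but never defined. The `build_prompt` function and the plain section headers are my own simplification; whether Seedance requires this exact tag syntax is an assumption based only on the example.

```python
import re


def build_prompt(references: dict[str, str], technical: str, action: str) -> str:
    """Assemble references, technical block, and action in that order."""
    # Every "@ NAME" used in the action must have a reference definition.
    used = set(re.findall(r"@ (\w+)", action))
    missing = used - set(references)
    if missing:
        raise ValueError(f"undefined reference tags: {sorted(missing)}")
    ref_block = "\n".join(
        f"<<<@ {name}>>>: {description}" for name, description in references.items()
    )
    return "\n\n".join([
        "REFERENCE DEFINITIONS", ref_block,
        "TECHNICAL BLOCK", technical,
        "PROMPT", action,
    ])


# Shortened descriptions, trimmed from the example for brevity.
references = {
    "JARL": "Viking warlord, greying beard, heavy fur cloak. Reference.",
    "KINGSHALL": "Viking great hall, vast dark stone chamber. Reference.",
}
print(build_prompt(
    references,
    technical="Shot on ARRI Alexa Mini, subtle organic film grain.",
    action="Wide establishing shot of @ KINGSHALL. @ JARL chews slowly, silent.",
))
```

The tag check is the useful part: a typo in a character name fails loudly before you spend a generation on it.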

The "Oner" vs. The Cut

Unlike many AI creators who rely on 2-second fast cuts, Malik pushed for 15-second "Oners" (long, continuous shots).

He used prompts for "camera shake" and "whip pans" to create a visceral, handheld feel that puts the audience inside the fray.

This style mimics a real cameraman running through a village, which tricks the brain into forgetting the content is AI-generated.

Phase 4: Production Continuity

The Spatial Continuity Hack

To keep objects (like flipped tables or scattered food) in the same place across different shots, Malik used a screenshot-to-reference workflow.

He would use Seedance ingredients to animate a wide establishing shot, then take a screenshot of the "mess," and use that as the background reference for all subsequent medium shots and close-ups.

This ensured the "geography" of the room remained identical, even when the AI had total freedom to animate the characters.

You can also upload video footage of the last few seconds of your edit timeline to “continue” the shot and keep the same spatial consistency.

Dynamic Stage Progression

Similar to the Hellgrind team (directors of another Higgsfield Originals piece), Malik evolved the locations through different phases.

He would take a screenshot of a "pristine" room, use it to generate a "battle version" with fire and chaos, and then use that as the new anchor.

This "baking in" of environmental changes allowed for complex, multi-stage storytelling within a single location.
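Once you have a few location states, it helps to track which anchor each one came from. Below is a minimal bookkeeping sketch, assuming you save each screenshot as a file; the `Anchor` class and the file names are hypothetical, and nothing here calls Seedance itself.

```python
from dataclasses import dataclass


@dataclass
class Anchor:
    """A reference image for a location state and the anchor it was built from."""
    label: str
    image: str  # hypothetical file path to the saved screenshot
    derived_from: "Anchor | None" = None


def lineage(anchor: "Anchor | None") -> list[str]:
    """Walk back through parent anchors and return labels oldest first."""
    chain = []
    while anchor is not None:
        chain.append(anchor.label)
        anchor = anchor.derived_from
    return list(reversed(chain))


pristine = Anchor("pristine", "hall_pristine.png")
battle = Anchor("battle", "hall_battle.png", derived_from=pristine)
print(lineage(battle))  # prints ['pristine', 'battle']
```

When a medium shot looks off, the lineage tells you which anchor to regenerate from instead of guessing.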

AI Character Voices

Consistency in dialogue was achieved by baking voice descriptions directly into the character cards.

By describing a character as "British" or "speaking like an Irish Viking," Higgsfield Seedance 2.0 automatically assigned consistent vocal profiles.

Malik found that giving the AI more "freedom" within these descriptions resulted in more realistic acting than if he tried to over-constrain the performance.

Phase 5: The "New School" Edit

Real-time Generating & Editing

Breaking away from the traditional "shoot everything then edit" mentality, Malik moved to a real-time loop.

He would generate a shot, immediately drop it into the timeline to check the lighting and continuity, and then generate the next shot based on the previous one.

This "live" feedback loop allowed him to catch continuity errors (like lighting shifts) instantly, rather than days later in the edit suite.

—

The Emotional Secret Sauce: The BTS

The most viral part of Mork was actually the AI-generated BTS (Behind the Scenes). Malik purposefully generated "bloopers" (like AI actors holding coffee cups or microphones accidentally entering the frame) to "humanize" the non-existent cast.

By creating a fake reality where these characters are "real actors" with personalities, he created a deeper emotional bond with the audience than the primary narrative alone could achieve.

One more thing, off the record from Malik: the 22-minute Mork was the warm-up. He's already in production on a 90-minute episode. More to come!

This was a long post, but hopefully it helps you!

My workflow is pretty similar, but I was surprised at how little image generation they did. We do nearly every frame, but I can see how that would be too time-consuming.

On my sci-fi project, I’m doing a bit of a hybrid: I’ll create a couple of frames for each scene, then let Seedance generate 70% new angles based on those anchor images.

The character sheets were super helpful. I agree with the tip: you need a big close-up and “chop off their heads” for the front wide shot on the character sheet.

Let me know what filmmaker you want me to interview next! (Kavan, Gossip Goblin, etc.)

And stay tuned for some insane stuff from us soon!

-Peej